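I want to share a little of "the technology behind LLMs, from the bottom of the stack up" each day. Each article is kept to a three-minute read, light enough not to feel like pressure but enough to help everyone grow a little every day.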

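Unigram language model tokenization was developed at Google. Training starts from a large set of candidate subword units and repeatedly discards the units that contribute least to the likelihood of the training corpus. Because the model assigns a probability to every possible segmentation of a string, Unigram tokenization can be stochastic: with sampling enabled (subword regularization), the same input may be split differently from one call to the next. Without sampling, it returns the single most probable segmentation, so the result is repeatable. Standard Byte Pair Encoding (BPE), by contrast, applies its learned merge rules deterministically, so the same input always produces the same tokenization.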
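To make the deterministic/stochastic contrast concrete, here is a minimal pure-Python sketch of how a unigram language model segments a word. The vocabulary and its probabilities (`VOCAB`) are made up for illustration, and `viterbi` and `sample` are hypothetical helper names, not part of any library. `viterbi` always returns the most probable segmentation; `sample` draws a segmentation in proportion to its probability, a simplified version of subword regularization (no smoothing parameter).

```python
import math
import random

# Hypothetical toy vocabulary: token -> unigram probability.
# A real Unigram model learns these values with EM; these numbers are made up.
VOCAB = {
    "subword": 0.05, "sub": 0.08, "word": 0.10,
    "s": 0.12, "u": 0.05, "b": 0.05, "w": 0.05, "o": 0.06, "r": 0.06, "d": 0.06,
}
MAX_LEN = max(len(tok) for tok in VOCAB)


def viterbi(text):
    """Deterministic: return the most probable segmentation of `text`."""
    n = len(text)
    best = [-math.inf] * (n + 1)  # best[i] = best log-prob of text[:i]
    back = [0] * (n + 1)          # back[i] = start index of the last token
    best[0] = 0.0
    for end in range(1, n + 1):
        for start in range(max(0, end - MAX_LEN), end):
            piece = text[start:end]
            if piece in VOCAB:
                score = best[start] + math.log(VOCAB[piece])
                if score > best[end]:
                    best[end], back[end] = score, start
    tokens, end = [], n
    while end > 0:  # follow back-pointers from the end of the string
        tokens.append(text[back[end]:end])
        end = back[end]
    return tokens[::-1]


def sample(text, rng):
    """Stochastic: draw a segmentation with probability proportional to its score."""
    n = len(text)
    alpha = [0.0] * (n + 1)  # alpha[i] = total probability of all segmentations of text[:i]
    alpha[0] = 1.0
    for end in range(1, n + 1):
        for start in range(max(0, end - MAX_LEN), end):
            piece = text[start:end]
            if piece in VOCAB:
                alpha[end] += alpha[start] * VOCAB[piece]
    tokens, end = [], n
    while end > 0:  # sample the last token, then continue on the remaining prefix
        starts = [s for s in range(max(0, end - MAX_LEN), end) if text[s:end] in VOCAB]
        weights = [alpha[s] * VOCAB[text[s:end]] for s in starts]
        start = rng.choices(starts, weights=weights)[0]
        tokens.append(text[start:end])
        end = start
    return tokens[::-1]


rng = random.Random(0)
print(viterbi("subwords"))  # ['subword', 's'] on every call
print({tuple(sample("subwords", rng)) for _ in range(200)})  # usually several distinct splits
```

With these toy numbers `subword` + `s` wins (0.05 × 0.12 = 0.006, versus 0.08 × 0.10 × 0.12 = 0.00096 for `sub` + `word` + `s`), yet sampling still returns `sub` + `word` + `s` in roughly one draw out of seven.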

Here is a demonstration. First, import the required packages:

```python
from tokenizers import Tokenizer
from tokenizers.models import Unigram
from tokenizers.trainers import UnigramTrainer
from tokenizers.pre_tokenizers import Whitespace
```

Next, define a sample corpus:

```python
corpus = [
    "Subword tokenizers break text sequences into subwords.",
    "This sentence is another part of the corpus.",
    "Tokenization is the process of breaking text down into smaller units.",
    "These smaller units can be words, subwords, or even individual characters.",
    "Transformer models often use subword tokenization.",
]
```

Then configure the tokenizer and train it:

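```python
# Start with an empty Unigram model; the trainer fills in the vocabulary
tokenizer = Tokenizer(Unigram())

# Split on whitespace and separate punctuation before the model sees the text
tokenizer.pre_tokenizer = Whitespace()

# vocab_size is an upper bound; with a corpus this small the learned vocabulary
# will likely be much smaller. The <unk> token lets encode() handle characters
# that never appeared in training.
trainer = UnigramTrainer(vocab_size=5000, special_tokens=["<unk>"], unk_token="<unk>")

tokenizer.train_from_iterator(corpus, trainer=trainer)
```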
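The training call performs the "discard uncommon units" step internally. The following is a heavily simplified, pure-Python sketch of one pruning round, assuming fixed token probabilities: each multi-character token is scored by how much corpus log-likelihood (under best segmentations) would be lost if it were removed, and only the most useful ones are kept. The real `UnigramTrainer` also re-estimates probabilities with EM and repeats this until it reaches `vocab_size`; the `keep_ratio` parameter here plays roughly the role of its `shrinking_factor`. `prune`, `best_logprob`, `corpus_loglik`, `WORD_COUNTS`, and `PROBS` are hypothetical names, and the numbers are made up.

```python
import math
from collections import Counter


def best_logprob(word, logp, max_len):
    """Log-probability of the best (Viterbi) segmentation of `word`."""
    best = [-math.inf] * (len(word) + 1)
    best[0] = 0.0
    for end in range(1, len(word) + 1):
        for start in range(max(0, end - max_len), end):
            piece = word[start:end]
            if piece in logp:
                best[end] = max(best[end], best[start] + logp[piece])
    return best[-1]


def corpus_loglik(word_counts, logp, max_len):
    """Sum of best-segmentation log-probabilities, weighted by word frequency."""
    return sum(c * best_logprob(w, logp, max_len) for w, c in word_counts.items())


def prune(word_counts, probs, keep_ratio=0.5):
    """One simplified pruning round: keep the multi-character tokens whose
    removal would cost the most log-likelihood; always keep single characters."""
    logp = {tok: math.log(p) for tok, p in probs.items()}
    max_len = max(len(tok) for tok in logp)
    base = corpus_loglik(word_counts, logp, max_len)
    loss = {}
    for tok in logp:
        if len(tok) == 1:
            continue  # single characters keep every word segmentable
        reduced = {t: lp for t, lp in logp.items() if t != tok}
        loss[tok] = base - corpus_loglik(word_counts, reduced, max_len)
    ranked = sorted(loss, key=loss.get, reverse=True)  # most useful first
    kept = set(ranked[: int(len(ranked) * keep_ratio)])
    kept |= {tok for tok in probs if len(tok) == 1}
    return {tok: probs[tok] for tok in probs if tok in kept}


# Hypothetical word counts (after whitespace pre-tokenization) and seed probabilities
WORD_COUNTS = Counter({"subword": 3, "subwords": 2, "words": 1})
PROBS = {"subword": 0.05, "sub": 0.1, "word": 0.1, "xyz": 0.05}
PROBS.update({ch: 0.05 for ch in "subwordxyz"})

pruned = prune(WORD_COUNTS, PROBS, keep_ratio=0.5)
print(sorted(t for t in pruned if len(t) > 1))  # ['subword', 'word']
```

Here `sub` and `xyz` are dropped: `sub` never appears in a best segmentation because `subword` always beats it, and `xyz` never occurs in the corpus, so removing either costs nothing.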

Next, inspect the result:

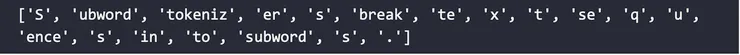

```python
# encode() returns an Encoding object; .tokens lists the subword strings
output = tokenizer.encode("Subword tokenizers break text sequences into subwords.")
print(output.tokens)
```

The output is: