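I want to share a little bit each day about "the techniques that make up the LLM stack, from the bottom layer up," and keep each post under a three-minute read, so that it never feels like too much pressure but everyone can still grow a little every day.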

Through HuggingFace, a Meta Llama model can be run as follows:

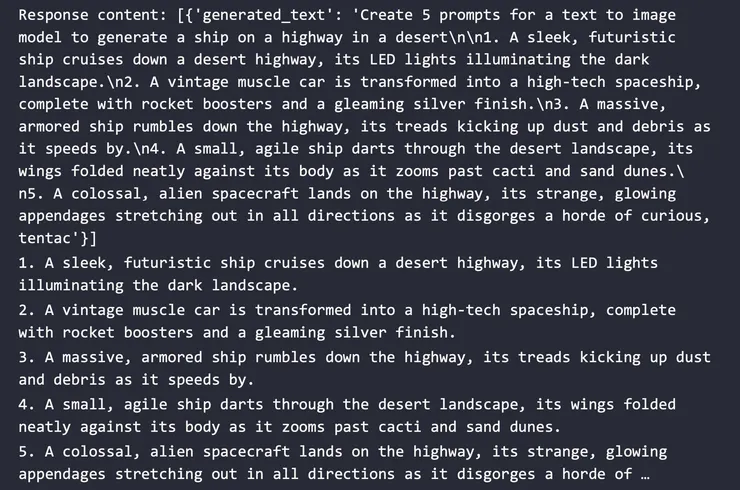

    prompt = 'Create 5 prompts for a text to image model to generate a ship on a highway in a desert\n'

    # LLaMA2 is assumed to be the callable defined in the setup shown above
    response = LLaMA2(prompt)
    print("Response content:", response)

    # Start from an empty result so the parsing below never hits an undefined name
    sequences = [{'generated_text': ''}]
    if isinstance(response, list) and len(response) > 0:
        if 'generated_text' in response[0]:
            sequences = [{'generated_text': response[0]['generated_text']}]
        else:
            print("generated_text not in response[0]")
    else:
        print("Response is not list-like or is empty")

    text_content = sequences[0]['generated_text']
    lines = text_content.split('\n')

    # Keep only the numbered lines, i.e. the five generated prompts
    prompts = [line for line in lines if line.startswith(('1. ', '2. ', '3. ', '4. ', '5. '))]
    for generated_prompt in prompts:
        print(generated_prompt)
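The parsing step can be tried without loading a model. The following is a minimal sketch, not the code from this post: `extract_numbered_prompts` and `fake_response` are hypothetical names, and the stubbed response only assumes the list-of-dicts shape with a `generated_text` key that the code above checks for. It also swaps the fixed `'1. '` to `'5. '` prefixes for a regular expression, so it accepts any list number and tolerates leading spaces.

```python
import re


def extract_numbered_prompts(response):
    """Return the lines of the generated text that start with a list number such as '1. '."""
    # Accept only a non-empty list whose first item is a dict (the shape checked above).
    if not isinstance(response, list) or not response:
        return []
    first = response[0]
    if not isinstance(first, dict):
        return []
    text = first.get("generated_text", "")
    # One or more digits, a dot, then whitespace, at the start of the stripped line.
    numbered = re.compile(r"^\d+\.\s")
    stripped_lines = (line.strip() for line in text.split("\n"))
    return [line for line in stripped_lines if numbered.match(line)]


# Hypothetical stand-in for a model response; the text is made up for illustration.
fake_response = [{
    "generated_text": (
        "Create 5 prompts for a text to image model\n"
        "1. A cargo ship stranded on a desert highway at dusk\n"
        "  2. A sailboat crossing sand dunes beside a road\n"
        "These are some ideas."
    )
}]

for line in extract_numbered_prompts(fake_response):
    print(line)
```

Returning an empty list for a malformed response keeps the caller's loop simple: it just prints nothing instead of raising an error.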

The result of running the Llama 2 code is: